Project Overview

ISEB provides digital entrance examinations used by independent schools during their admissions process.

For more than eight years, the digital platform had seen little structural or visual evolution, while new EdTech competitors entered the market with more modern tools.

This shift was reflected in declining subscription numbers, signalling an urgent need to reassess how the platform supported the wider examination process.

Problem

Applicants faced fragmented journeys across multiple organisations

Systems were limited and required manual coordination

Increased friction in a high-stress process

Solution

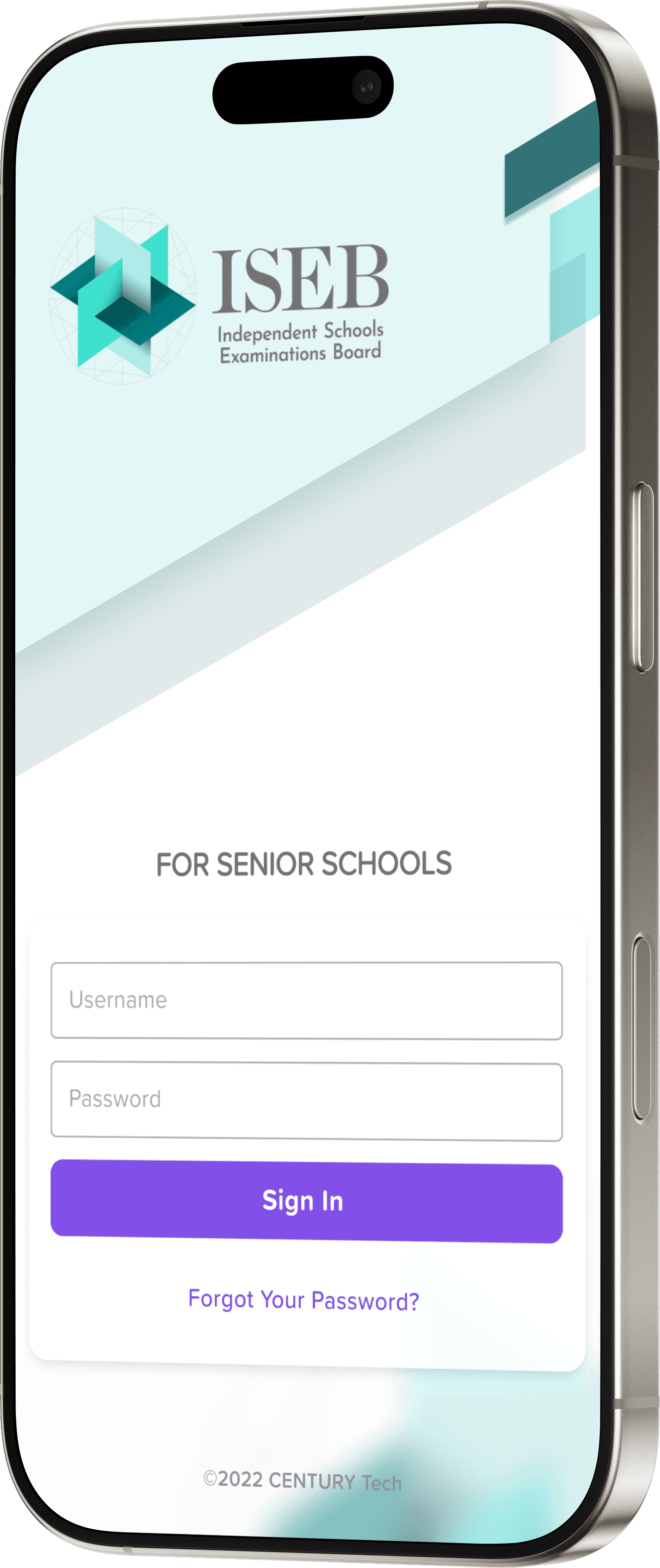

The proposed direction introduced role-based access for the key participants involved in the examination process — applicants, guardians, invigilation centres, senior schools and ISEB administrators.

Current Workflow

EXPECTED PLATFORM FLOW

Guardian registers applicant with Senior School

Assessment sitting arranged

Applicant completes assessment

Senior School reviews results

Admissions decision communicated

ACTUAL OPERATIONAL WORKFLOW

Communication between schools, invigilation centres and administrators relied heavily on manual processes, including email exchanges, spreadsheet exports and manual result declarations.

Research findings

Discovery research highlighted several structural challenges within the examination ecosystem.

Application Overlap

There was no way to track a single applicant's applications to multiple schools.

Manual Processes

Applicant onboarding and exam administration were handled manually through external invigilation centres.

Communication Gaps

Invigilators had no access to testing tools, so exam-related information had to be exchanged by email or phone.

Operational Burden

Invigilation centres assigned an average of 1.5 staff members to manage examinations, without compensation.

Post-Exam Complexity

Test declarations were submitted manually by email, once for each applicant at each school.

High-Stress Environment

The high-stakes test placed pressure on applicants, leading to a range of health and administrative issues.

Multiple Test Attempts

Because tracking was limited, some students were able to take the same test multiple times by applying to different schools.

Limited System Access

ISEB administrators had no interface to oversee the process, and guardians had no direct access to exam information.

Rethinking the Platform Infrastructure

Rather than simply improving the applicant interface, the project explored how the platform could better support the entire examination ecosystem.

This required shifting the platform from a single-user testing tool to a shared infrastructure supporting multiple organisations.

Expanding the system actors

The first step was recognising the full set of stakeholders involved in the admissions process, beyond applicants.

Mapping these roles revealed how responsibilities and communication flowed between organisations, highlighting opportunities to centralise key processes within the platform.

Diagram: system actors and their platform interfaces. External actors: Guardian, Applicant. Institutional actors: Senior School, Invigilation centre. System actor: Admin.

Elements shown: Guardian portal, Senior School portal, Invigilation portal, Admin, Assessment player, temporary exam interface (access via code), Onboarding, Organisation onboarding, Scheduling, Assessment, Special requirements, Results, and the Applicant Profile as the core system record.

Introducing a shared applicant record

The platform architecture was restructured around a shared applicant profile and the introduction of role-based permissions.

By introducing a shared data structure, the platform reduced duplication and enabled organisations to collaborate within a single system.
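To make the idea of a shared record with role-based permissions more concrete, here is a minimal sketch in TypeScript. The five roles come from this case study; the action names, the record fields, the `can` and `linkSchool` helpers, and the permission matrix itself are hypothetical and chosen only for illustration, not taken from the actual platform. The point it shows is that a school attaches itself to an existing applicant record instead of creating its own copy, which is what makes applications to several schools visible in one place.

```typescript
// Roles are the participants named in this case study; everything else is hypothetical.
type Role = "guardian" | "applicant" | "seniorSchool" | "invigilationCentre" | "admin";
type Action =
  | "editProfile"
  | "selectCentre"
  | "linkToSchool"
  | "scheduleSitting"
  | "submitDeclaration"
  | "viewResults";

// A single applicant record shared by every organisation, rather than one copy per school.
interface ApplicantRecord {
  id: string;
  guardianId: string;
  invigilationCentreId: string | null;
  linkedSchoolIds: Set<string>;
}

// Assumed permission matrix, for illustration only.
const PERMISSIONS: Record<Role, ReadonlySet<Action>> = {
  guardian: new Set<Action>(["editProfile", "selectCentre"]),
  applicant: new Set<Action>(), // applicants only use the temporary exam interface
  seniorSchool: new Set<Action>(["linkToSchool", "viewResults"]),
  invigilationCentre: new Set<Action>(["scheduleSitting", "submitDeclaration"]),
  admin: new Set<Action>([
    "editProfile", "selectCentre", "linkToSchool",
    "scheduleSitting", "submitDeclaration", "viewResults",
  ]),
};

// Checks whether a role is allowed to perform an action.
function can(role: Role, action: Action): boolean {
  return PERMISSIONS[role].has(action);
}

// A school attaches itself to the existing record; no duplicate applicant is created,
// so applications to several schools stay visible on one profile.
function linkSchool(role: Role, record: ApplicantRecord, schoolId: string): void {
  if (!can(role, "linkToSchool")) {
    throw new Error(`${role} is not allowed to link schools`);
  }
  record.linkedSchoolIds.add(schoolId);
}
```

If two schools call `linkSchool` for the same applicant, the record ends up with two school IDs rather than two separate applicant entries. Keeping permissions in one table makes it easy to review who can do what, at the cost of being coarser than per-record rules.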

Supporting the full examination workflow

With the new platform infrastructure in place, the system could support the operational flow of examinations across institutions.

Guardians create an applicant profile and select an invigilation centre

Schools connect applicants to their organisation

Invigilation centres manage assessment scheduling and session logistics

Applicants access the assessment player using a temporary access code, under invigilator supervision

Following completion, invigilators submit assessment declarations

Results are automatically made available to schools through the platform

This structure allows the entire assessment lifecycle—from applicant registration to result distribution—to be coordinated within a unified system.
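The workflow above can be read as a fixed sequence of stages for each applicant. The TypeScript sketch below models it as a simple linear state machine. The stage names, the `advance` and `submitDeclaration` functions, and the ordering constraint (for example, that a school link precedes scheduling) are assumptions made for illustration; the case study does not specify how the platform enforces sequencing.

```typescript
// Hypothetical lifecycle stages, one per step of the workflow listed above.
type Stage =
  | "registered"       // guardian created the profile and chose a centre
  | "linkedToSchool"   // at least one senior school connected the applicant
  | "scheduled"        // invigilation centre booked a sitting
  | "completed"        // applicant finished in the assessment player
  | "declared"         // invigilator submitted the assessment declaration
  | "resultsReleased"; // results visible to every linked school

// Assumed ordering of the stages.
const ORDER: readonly Stage[] = [
  "registered", "linkedToSchool", "scheduled", "completed", "declared", "resultsReleased",
];

// Moves an applicant exactly one step forward; stages cannot be skipped.
function advance(current: Stage): Stage {
  const i = ORDER.indexOf(current);
  if (i === ORDER.length - 1) {
    throw new Error("lifecycle already complete");
  }
  return ORDER[i + 1];
}

// Assumption: once the declaration is in, results are released with no manual step,
// replacing the per-school emails described in the research findings.
function submitDeclaration(current: Stage): Stage {
  if (current !== "completed") {
    throw new Error(`cannot declare from stage "${current}"`);
  }
  return advance(advance(current)); // completed -> declared -> resultsReleased
}
```

A strictly linear model keeps the sketch simple; a real system would likely also need branches such as rescheduled or cancelled sittings.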